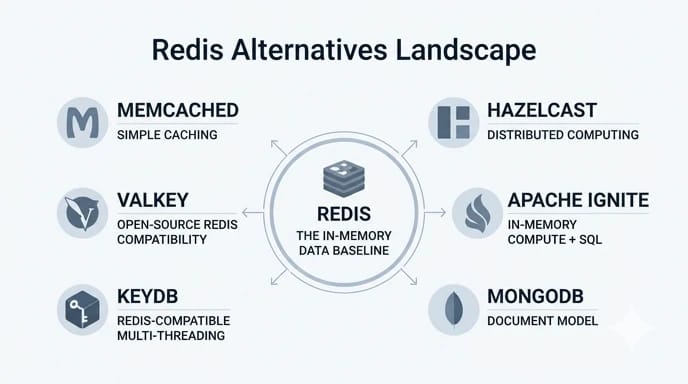

Redis has long been one of the most popular in-memory data stores in modern application architectures. However, many teams reach a point where Redis is no longer the perfect fit — whether due to scaling limitations, licensing concerns, or workload complexity.

If you're new to Redis internals, understanding how it communicates over the network, including its default port configuration (TCP 6379), can help clarify how it fits into modern architectures.

In recent years, we have seen more teams actively researching Redis alternatives due to licensing changes, scaling constraints, operational complexity, cloud-native requirements, or cost concerns. As a result, the demand for reliable and production-ready in-memory data store alternatives has grown significantly.

If you are specifically evaluating alternatives to Redis caching, comparing Redis vs Memcached vs Hazelcast, or looking for scalable in-memory data store alternatives that fit cloud or Kubernetes environments, this guide is designed for you.

Quick Comparison

If you need a fast way to compare the main Redis alternatives, the matrix below highlights the differences that usually matter most in real deployments. Instead of listing every technical detail, it focuses on the factors teams typically evaluate first: feature depth, persistence, multi-threading, Redis compatibility, common use cases, and the scale each option is best suited for.

| Technology | Feature Depth | Persistence | Multi-Threading | Redis Compatibility | Typical Use Case | Recommended Scale |
|---|---|---|---|---|---|---|
| Redis | Rich data structures | Yes | Limited (mostly single-threaded) | Native | Caching, sessions, real-time apps | Small to very large deployments |
| Memcached | Basic key-value caching | No | Yes | No | Ephemeral cache for sessions, page fragments, and query results | Small to large caching layers where simplicity matters most |
| Hazelcast | Advanced distributed data grid and compute features | Optional | Yes | No | Distributed caching, real-time analytics, and large-scale session clustering | Medium to very large distributed systems |
| Apache Ignite | Advanced caching, SQL, and in-memory compute | Yes | Yes | No | Compute-heavy workloads, distributed queries, and transactional data processing | Medium to very large enterprise workloads |
| MongoDB | Document-oriented storage with in-memory advantages | Yes | Yes | No | Applications that need flexible document storage plus caching-like performance | Medium to large production environments |
| KeyDB | Rich Redis-like feature set | Yes | Yes | Yes | Redis replacement for high-concurrency caching and real-time workloads | Small to very large deployments needing better CPU utilization |
| Valkey | Rich Redis-compatible feature set | Yes | Limited compared to KeyDB | Yes | Open-source Redis-compatible caching, sessions, and message handling | Small to large deployments prioritizing open governance |

Quick insight: If you need a drop-in Redis replacement, focus on KeyDB or Valkey. For simple caching, Memcached is usually the most efficient option. For distributed systems, Hazelcast and Ignite are better suited.

This comparison is intentionally practical. Some tools are best when you want a lightweight cache, while others are better suited for distributed computing, analytics, or minimizing migration effort from Redis. The sections below explain where each alternative fits best and what trade-offs to expect in production.

Why Look for Redis Alternatives?

In our experience, most teams do not start by looking for Redis alternatives. They usually adopt Redis because it works well at small to medium scale. The need for alternatives often appears later, after Redis is already deeply integrated.

Here are the most common reasons we see teams evaluate alternatives to Redis caching:

- First, licensing and governance concerns. Redis licensing changes have made some organizations uncomfortable, especially enterprises with strict open-source policies. This has driven interest in Redis-compatible and fully open-source in-memory data store alternatives.

- Second, scalability and multi-threading limitations. Redis executes commands on a single main thread; Redis 6 added optional I/O threads, but command execution itself remains single-threaded. While this simplifies consistency, it can become a bottleneck on multi-core systems. Teams handling high concurrency often hit CPU limits earlier than expected.

- Third, operational complexity at scale. Running Redis clusters, managing persistence, handling failovers, and tuning memory eviction policies can become operationally expensive. Some Redis alternatives offer simpler scaling models or fully managed experiences.

- Fourth, workload mismatch. Redis is often used for more than caching, but not all workloads fit Redis equally well. For example, large distributed computing tasks or SQL-style queries may be better served by other in-memory data store alternatives.

- Finally, cost optimization. At scale, memory-heavy Redis deployments can become expensive, especially in cloud environments. Some alternatives offer better memory efficiency or tiered storage.

Understanding which of these factors applies to your system is the first step toward choosing the best Redis replacements.

Key Criteria to Consider When Choosing Redis Alternatives

Based on our production experience, choosing Redis alternatives without a clear evaluation framework often leads to costly migrations or suboptimal architectures. Below are the criteria we recommend evaluating before making a decision.

- Performance Characteristics

Not all Redis alternatives deliver the same performance profile. Some are optimized for raw latency, others for throughput or compute-heavy workloads.

- Data Model and Features

Redis supports a wide range of data structures, which many applications rely on. When evaluating alternatives to Redis caching, it is critical to identify which Redis features your application actually uses.

- Scalability and Clustering

Horizontal scalability is where many Redis alternatives differentiate themselves. Hazelcast and Apache Ignite are designed for elastic scaling across many nodes, often with less manual intervention.

- Persistence and Durability

Redis persistence is optional, but many applications rely on it. Some alternatives treat persistence as a core feature, while others, like Memcached, do not support it at all.

- Ecosystem and Community Support

A strong ecosystem matters more than many teams expect. Redis benefits from extensive tooling, integrations, and community knowledge. When evaluating the best Redis replacements, consider client library support, monitoring tools, and operational documentation.

Overview of Top Redis Alternatives

Below we break down the most important Redis alternatives, focusing on how they perform in real-world scenarios rather than just feature lists.

Memcached

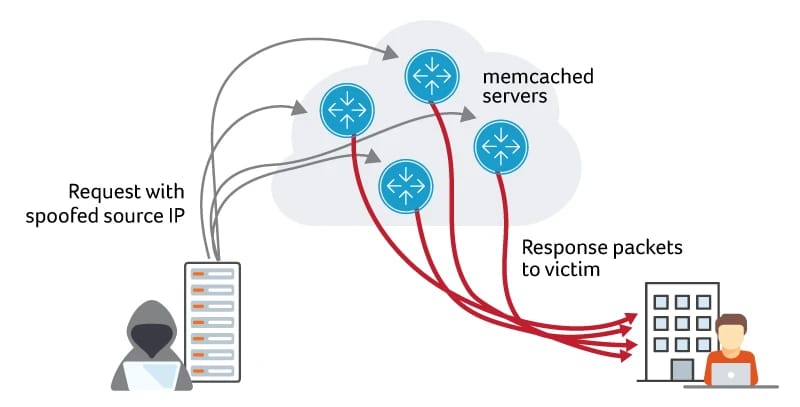

Memcached is often the first tool mentioned when discussing alternatives to Redis caching. We have used Memcached in high-traffic systems where simplicity and raw speed were the primary goals.

Memcached is a pure in-memory key-value store with no persistence. This design choice makes it extremely fast and memory-efficient, but also limits its applicability.

- Where Memcached Excels

Memcached performs exceptionally well as a distributed cache for database query results, rendered pages, or session tokens where data loss is acceptable. Its simple architecture makes scaling predictable and operationally lightweight.
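Part of that predictability comes from the fact that Memcached servers do not coordinate with one another: the client library decides which server holds each key, and many clients use consistent hashing for this. The sketch below is a simplified hash ring with virtual nodes; the class name, server addresses, and the choice of MD5 are our own assumptions, not a specific client's implementation. It shows why adding a fourth server relocates roughly a quarter of the keys instead of most of them.

```python
import bisect
import hashlib


class HashRing:
    """Minimal consistent-hash ring of the kind Memcached clients use to pick a server."""

    def __init__(self, servers, vnodes=100):
        self.vnodes = vnodes
        self._ring = []  # sorted list of (hash, server) points on the ring
        for server in servers:
            self.add(server)

    @staticmethod
    def _hash(value):
        # First 8 bytes of MD5 as an integer; any well-distributed hash works here.
        return int.from_bytes(hashlib.md5(value.encode()).digest()[:8], "big")

    def add(self, server):
        # Each server owns many virtual points so load spreads evenly.
        for i in range(self.vnodes):
            bisect.insort(self._ring, (self._hash(f"{server}#{i}"), server))

    def server_for(self, key):
        h = self._hash(key)
        idx = bisect.bisect(self._ring, (h, ""))
        # Wrap around to the first point when the key hashes past the last point.
        return self._ring[idx % len(self._ring)][1]


keys = [f"session:{n}" for n in range(10_000)]
ring = HashRing(["cache-a:11211", "cache-b:11211", "cache-c:11211"])
before = {k: ring.server_for(k) for k in keys}
ring.add("cache-d:11211")
moved = sum(before[k] != ring.server_for(k) for k in keys)
print(f"{moved / len(keys):.0%} of keys moved")
```

Every key that moves lands on the new server; keys on the existing servers stay where they were, so the cache stays mostly warm during scale-out.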

In Redis vs Memcached comparisons, Memcached usually wins on simplicity and loses on features.

- Limitations to Be Aware Of

Memcached does not support advanced data structures, persistence, or complex querying. We have seen teams struggle when application requirements grow beyond simple caching.

If you need durability, replication guarantees, or rich data types, Memcached is not among the best Redis replacements.

Hazelcast

Hazelcast is one of the most powerful in-memory data store alternatives we have worked with, especially in distributed computing environments.

Unlike Redis, Hazelcast is designed from the ground up for clustering and horizontal scalability. It combines distributed caching with compute capabilities, which fundamentally changes how applications can be designed.

- Strengths of Hazelcast

Hazelcast shines in scenarios where data locality and distributed processing matter. We have seen it used successfully for real-time analytics, stream processing, and large-scale session management.

Its support for SQL querying on in-memory data is a major differentiator. This allows teams to query cached data without exporting it to another system.

- Operational Considerations

Hazelcast introduces more complexity than Redis. Cluster sizing, network configuration, and memory planning require careful attention. However, for large systems, this complexity often pays off.

When teams compare Redis vs Memcached vs Hazelcast, Hazelcast usually emerges as the most scalable but also the most complex option.

For a deeper technical comparison, we recommend reading our Redis vs Hazelcast analysis to understand how feature-rich alternatives differ from lightweight caches.
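Hazelcast's data locality rests on partitioning: every key maps to one of a fixed number of partitions (271 by default), and the cluster assigns partition ownership to members. The sketch below simulates that idea with a CRC32 stand-in for Hazelcast's real key hashing and a simple greedy rebalance of our own design. It is not Hazelcast's migration algorithm and ignores backup replicas, but it shows why a joining member takes over only a share of partitions while each key's partition never changes.

```python
import zlib
from collections import Counter

PARTITION_COUNT = 271  # Hazelcast's default number of partitions


def partition_id(key):
    # Stand-in hash; Hazelcast hashes the serialized key, not its CRC32.
    return zlib.crc32(key.encode()) % PARTITION_COUNT


def initial_table(members):
    """Assign partitions to members round-robin."""
    return {p: members[p % len(members)] for p in range(PARTITION_COUNT)}


def add_member(table, new_member):
    """Hand partitions from the busiest members to a joining member until balanced.

    Returns the migrated partitions; all other partitions keep their owner,
    and no key ever changes partition.
    """
    owners = Counter(table.values())
    owners[new_member] = 0
    migrated = []
    while max(owners.values()) - owners[new_member] > 1:
        donor = max(owners, key=owners.get)
        partition = next(p for p, owner in table.items() if owner == donor)
        table[partition] = new_member
        owners[donor] -= 1
        owners[new_member] += 1
        migrated.append(partition)
    return migrated


table = initial_table(["member-1", "member-2"])
pid = partition_id("session:42")
migrated = add_member(table, "member-3")
print(f"session:42 -> partition {pid}, now owned by {table[pid]}")
print(f"{len(migrated)} of {PARTITION_COUNT} partitions migrated:", dict(Counter(table.values())))
```

Because computation can be routed to whichever member owns a key's partition, processing runs next to the data instead of pulling entries across the network.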

Apache Ignite

Apache Ignite is often underestimated among Redis alternatives, but it deserves serious consideration for compute-heavy workloads.

Ignite combines distributed caching, SQL querying, and in-memory computing into a single platform. We have used it in scenarios where Redis simply could not handle the computational requirements.

- When Apache Ignite Makes Sense

Ignite is a strong choice when caching is only part of the problem. If your application needs distributed joins, transactions, or co-located computation, Ignite can outperform simpler in-memory data store alternatives.

- Trade-Offs

Apache Ignite has a steeper learning curve. Operational overhead is higher than Redis or Memcached. However, for the right workload, it is one of the best Redis replacements available.
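Ignite's co-located computation depends on affinity colocation: a cache key can carry an affinity key (in Java, for example, via the `@AffinityKeyMapped` annotation) so that related entries land in the same partition. The Python below does not use Ignite's API; it only simulates placement with a hypothetical `node_for` function and invented order and customer data, to show how placing orders by customer ID turns a distributed join into a local one.

```python
import zlib

NODES = ["node-a", "node-b", "node-c", "node-d"]


def node_for(affinity_value):
    """Stand-in affinity function: map an affinity value to the node that owns it."""
    return NODES[zlib.crc32(affinity_value.encode()) % len(NODES)]


# Hypothetical data: 1,000 orders spread over 50 customers.
orders = [(f"order-{o}", f"cust-{o % 50}") for o in range(1000)]


def remote_join_rows(use_affinity_key):
    """Count order rows stored on a different node than their customer row.

    Each such row needs a network hop when joining orders to customers.
    """
    remote = 0
    for order_id, customer_id in orders:
        # Without an affinity key, orders are placed by their own ID;
        # with one, they are placed by customer ID, next to the customer row.
        placement_key = customer_id if use_affinity_key else order_id
        if node_for(placement_key) != node_for(customer_id):
            remote += 1
    return remote


print("placed by order ID:   ", remote_join_rows(use_affinity_key=False))
print("placed by customer ID:", remote_join_rows(use_affinity_key=True))
```

With four nodes and random placement, roughly three quarters of the join rows need a remote lookup; with the affinity key, none do.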

MongoDB as a Redis Alternative

While MongoDB is not a traditional caching system, we often see it evaluated as one of the Redis alternatives due to its in-memory capabilities and flexible data model.

MongoDB works particularly well when teams want to reduce system sprawl and consolidate caching and persistence layers.

However, MongoDB should not be treated as a drop-in replacement for Redis. Latency characteristics and operational patterns are different.

If you are actively comparing these two systems, refer to our detailed breakdown: Redis vs MongoDB.

KeyDB

KeyDB is one of the most practical Redis alternatives we have tested for teams that want to move away from Redis without rewriting large parts of their application. In multiple production evaluations, KeyDB proved to be one of the best Redis replacements when Redis compatibility is a hard requirement.

KeyDB was built as a Redis-compatible, multi-threaded in-memory data store. Unlike Redis, which relies heavily on a single-threaded execution model, KeyDB can utilize multiple CPU cores efficiently. In high-concurrency workloads, we observed noticeably higher throughput compared to Redis with similar hardware.

- Why teams choose KeyDB

KeyDB supports the Redis protocol and command set, which allows existing Redis clients to work with minimal or no code changes. This makes it an attractive option for organizations seeking alternatives to Redis caching without risking major regressions.

- Limitations to consider

KeyDB is younger than Redis, and its community is considerably smaller. Teams operating at massive scale should check the project's recent release activity, long-term support outlook, and ecosystem maturity before committing.

Despite these considerations, KeyDB remains one of the most compelling in-memory data store alternatives for Redis users who need better CPU utilization.
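The reason existing clients keep working is that KeyDB (and Valkey) speak the same wire protocol as Redis, RESP, and listen on the same default port, 6379. Every client command travels as an array of bulk strings. The sketch below encodes one by hand; `encode_command` is an illustrative helper, not part of any client library.

```python
def encode_command(*args):
    """Encode a client command as a RESP array of bulk strings."""
    parts = [f"*{len(args)}\r\n".encode()]  # array header: number of elements
    for arg in args:
        data = arg if isinstance(arg, bytes) else str(arg).encode()
        # Bulk string: $<byte length>\r\n<bytes>\r\n
        parts.append(f"${len(data)}\r\n".encode() + data + b"\r\n")
    return b"".join(parts)


print(encode_command("SET", "user:1", "alice"))
# b'*3\r\n$3\r\nSET\r\n$6\r\nuser:1\r\n$5\r\nalice\r\n'
```

Sending those bytes to Redis, KeyDB, or Valkey returns the same `+OK` reply, so a client library does not need to know which server it is talking to.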

Valkey

Valkey has emerged as a promising open-source, Redis-compatible alternative: it is a fork of Redis 7.2.4 hosted by the Linux Foundation, created in 2024 after Redis changed its license. We have reviewed Valkey closely because it aims to preserve Redis compatibility while maintaining a fully open governance model.

Valkey supports the same core data structures as Redis and focuses on performance, stability, and long-term sustainability. For organizations concerned about licensing, Valkey is increasingly viewed as one of the best Redis replacements.

- Practical advantages

From our testing, Valkey behaves almost identically to Redis for common workloads. This makes it suitable for caching, session storage, and message queues. Teams already invested in Redis tooling can often reuse their existing infrastructure.

Like Redis, Valkey primarily targets Linux and other Unix-like systems; teams on Windows typically run it through WSL or containers, which is usually sufficient for development and testing.

- What to watch out for?

As an emerging project, Valkey is still building its ecosystem. While core functionality is stable, advanced tooling and third-party integrations are still catching up.

Amazon ElastiCache and Managed Alternatives

For teams running workloads on AWS, managed services often become part of the Redis alternatives discussion. Amazon ElastiCache supports Redis OSS, Memcached, and (since late 2024) Valkey, offering operational simplicity rather than architectural change.

While ElastiCache is not a new in-memory data store alternative, it is often chosen by teams who want to reduce operational burden rather than replace Redis functionality.

When managed services make sense

Managed services shine when operational reliability, automated backups, and scaling are more important than cost control. We have seen teams reduce downtime and operational incidents by moving from self-managed Redis clusters to managed platforms.

However, managed services can become expensive at scale, and vendor lock-in is a real concern.

Use Case Scenarios: Which Alternative Fits Your Needs?

Based on hands-on experience, choosing among Redis alternatives is easiest when framed around specific use cases.

- Best for simple caching

If your primary need is fast, ephemeral caching, Memcached remains one of the strongest alternatives to Redis caching. Its simplicity reduces operational risk and makes performance highly predictable.

- Best for Redis compatibility

For teams that rely heavily on Redis commands and data structures, KeyDB and Valkey are the safest in-memory data store alternatives. They minimize migration effort and preserve application behavior.

- Best for distributed computing and analytics

Hazelcast and Apache Ignite stand out in scenarios involving distributed processing, real-time analytics, and large datasets. These tools go far beyond traditional caching and are often compared in Redis vs Memcached vs Hazelcast evaluations.

- Best for cloud-native architectures

Managed solutions and tools with strong Kubernetes support tend to perform better in dynamic environments. Hazelcast and Ignite both offer robust cloud-native deployment options.

If your use case involves streaming or event-driven architectures, it is also worth understanding how Redis compares with log-based systems such as Kafka. For a deeper dive, refer to our technical comparison: Redis vs Kafka.

| If you need... | Best-fit option | Why it fits |
|---|---|---|
| Simple ephemeral caching | Memcached | Low complexity, strong performance, no persistence overhead |
| Redis-compatible migration | KeyDB or Valkey | Minimal application changes and familiar command model |
| Distributed analytics or compute | Hazelcast or Apache Ignite | Better suited for clustered processing and large-scale workloads |
| Flexible document storage plus caching-like behavior | MongoDB | Useful when consolidating storage and caching layers |
| Operational simplicity with managed infrastructure | ElastiCache or managed alternatives | Reduces operational burden and improves reliability |

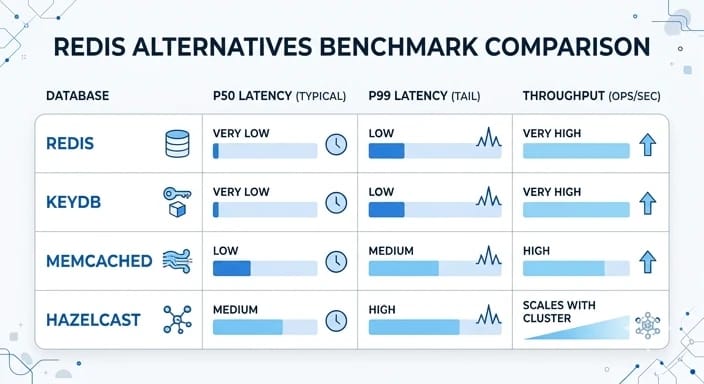

Benchmarks: Latency & Throughput

Performance is often the deciding factor when evaluating Redis alternatives. While feature sets and scalability matter, real-world latency and throughput determine how these systems behave under production load.

To provide a meaningful comparison, we focus on commonly used benchmarking approaches such as YCSB (Yahoo Cloud Serving Benchmark) along with observations from real-world deployments.

In production environments, these differences become more visible under sustained load rather than short benchmark bursts.

Test Environment & Methodology

Benchmarking in-memory data stores is highly sensitive to environment configuration. Results can vary significantly depending on CPU, memory speed, network latency, and dataset size.

In most standardized tests, including YCSB, workloads simulate typical operations such as read-heavy caching, write-heavy ingestion, or mixed workloads. Key factors considered include:

- Dataset size relative to available RAM

- Read/write ratio (e.g., 80/20 or 50/50)

- Concurrency levels (number of client threads)

- Network overhead in distributed setups
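To make these parameters concrete, here is a minimal, hypothetical workload generator in the spirit of YCSB. It is not YCSB's implementation: it mixes reads and updates at a chosen ratio and skews traffic toward a hot subset of keys, a crude stand-in for the Zipfian request distribution that YCSB's core workloads typically use. The function and parameter names are our own.

```python
import random
from collections import Counter


def generate_ops(n_ops, n_keys, read_ratio, hot_fraction=0.2, hot_traffic=0.8, seed=7):
    """Yield (operation, key) pairs for a read/update mix with a hot-set skew.

    A share of hot_traffic of all requests targets the first hot_fraction of keys.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    hot_keys = max(1, int(n_keys * hot_fraction))
    for _ in range(n_ops):
        op = "read" if rng.random() < read_ratio else "update"
        if rng.random() < hot_traffic or hot_keys >= n_keys:
            key = rng.randrange(hot_keys)
        else:
            key = rng.randrange(hot_keys, n_keys)
        yield op, f"user{key}"


# An 80/20 read/update mix over 10,000 keys.
ops = list(generate_ops(n_ops=100_000, n_keys=10_000, read_ratio=0.8))
mix = Counter(op for op, _ in ops)
hot_share = sum(1 for _, key in ops if int(key[len("user"):]) < 2_000) / len(ops)
print(f"reads: {mix['read'] / len(ops):.3f}, hot-set share: {hot_share:.3f}")
```

Replaying such a stream from many client threads, against a dataset sized relative to available RAM, covers the four factors listed above.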

In our experience, synthetic benchmarks should always be complemented with real-world traffic patterns, as production workloads often behave differently from controlled tests.

Results (P50 / P95 / P99, Throughput)

Across multiple benchmarks and production observations, the performance characteristics of Redis alternatives generally follow predictable patterns:

- Redis / Valkey: Very low latency (especially P50), but may show higher P99 latency under extreme concurrency due to single-threaded execution.

- KeyDB: Improved throughput and better P95/P99 latency under high concurrency thanks to multi-threading. Performs well on multi-core systems.

- Memcached: Extremely low latency and high throughput for simple key-value operations. Performance remains consistent as long as workloads stay simple.

- Hazelcast: Higher baseline latency compared to Redis, but scales better across nodes. Throughput increases significantly in distributed environments.

- Apache Ignite: Slightly higher latency due to richer features (SQL, transactions), but strong throughput in compute-heavy workloads.

In relative terms, Redis and Memcached typically lead on P50 latency, while KeyDB and distributed systems pull ahead in sustained throughput under heavy load.

| System | P50 Latency | P99 Latency | Throughput |
|---|---|---|---|
| Redis | Very Low | Medium under load | High |
| KeyDB | Very Low | Low | Very High |
| Memcached | Very Low | Low | Very High |
| Hazelcast | Medium | Medium | Scales with cluster |

Interpretations & Caveats

Benchmark results should never be interpreted in isolation. Several important caveats apply:

- Single-node vs distributed: Tools like Redis and Memcached excel on single nodes, while Hazelcast and Ignite show their strength in clusters.

- Feature overhead: Systems with richer functionality (e.g., SQL queries or transactions) naturally introduce additional latency.

- Memory efficiency: Higher throughput does not always mean better cost efficiency, especially in memory-intensive workloads.

- Tail latency matters: P99 latency often impacts user experience more than average latency, especially in real-time applications.
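Because averages hide tail behavior, it helps to see how P50, P95, and P99 are derived from raw latency samples. The sketch below uses the nearest-rank definition (some benchmark tools interpolate instead) on made-up data in which 2% of requests are slow.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: smallest sample with at least p% of samples at or below it."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 980 fast requests and 20 slow ones.
latencies_ms = [0.3] * 980 + [25.0] * 20
for p in (50, 95, 99):
    print(f"P{p}: {percentile(latencies_ms, p)} ms")
print(f"mean: {sum(latencies_ms) / len(latencies_ms):.2f} ms")
```

Here the mean stays under 1 ms and P95 is unaffected, yet P99 jumps to 25 ms: exactly the kind of tail an average conceals.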

Ultimately, the best approach is to benchmark using your own workload. Synthetic tools like YCSB provide a baseline, but production traffic patterns are the only reliable way to validate performance.

Real-world Case Studies / When We Switched

Beyond benchmarks and feature comparisons, real-world decisions are often shaped by practical constraints such as scaling limits, operational overhead, and cost pressures. The table below summarizes simplified patterns from migration scenarios we have encountered across production systems:

| Scenario | Switched To | Main Reason | Outcome |
|---|---|---|---|
| High-concurrency API platform | KeyDB | Redis CPU bottlenecks under heavy load | Higher throughput with minimal code changes |
| Simple caching layer | Memcached | No persistence requirement | Lower cost and simpler operations |
| Real-time analytics system | Hazelcast | Need for distributed processing | Better horizontal scaling and in-memory compute |
| Licensing-sensitive environment | Valkey | Open governance concerns | Maintained Redis compatibility |
| Compute-heavy workload | Apache Ignite | Caching alone was not enough | Added distributed queries and processing |

These examples highlight a common pattern: teams rarely switch because Redis fails completely. Instead, they outgrow it in specific dimensions such as concurrency, scale, or workload complexity.

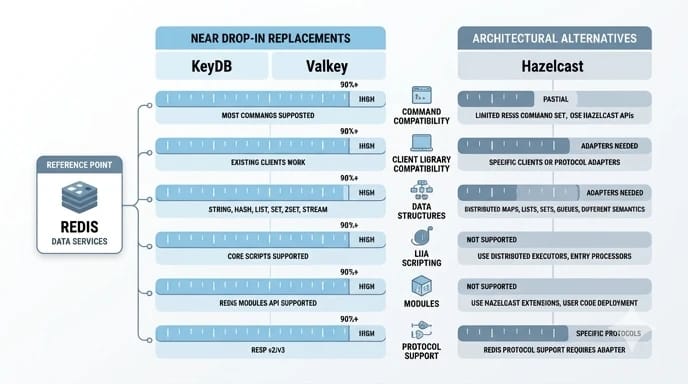

Command & Module Compatibility Matrix (Redis ↔ KeyDB / Valkey / Hazelcast)

One of the most critical factors when replacing Redis is command and module compatibility. While some alternatives are designed to be drop-in replacements, others require partial or full application changes.

The table below provides a practical overview of compatibility across commonly used Redis features.

| Feature / Command Group | Redis | KeyDB | Valkey | Hazelcast |
|---|---|---|---|---|
| Redis Client Library Compatibility | Full | Full | Full | No (uses Hazelcast clients) |
| Basic Commands (GET/SET/DEL) | Full | Full | Full | Partial (via APIs) |
| Data Structures (Lists, Sets, Hashes) | Full | Full | Full | Partial (different abstractions) |
| Sorted Sets (ZSET) | Full | Full | Full | Limited |
| Pub/Sub | Full | Full | Full | Supported (different model) |
| Lua Scripting | Full | Full | Full | No |
| Streams | Full | Full | Full | Limited |
| Transactions (MULTI/EXEC) | Full | Full | Full | Different (transaction APIs) |
| Modules (RedisJSON, RedisSearch, etc.) | Full | Partial | Limited (growing) | No (alternative features instead) |
| Redis Protocol Support | Native | Full | Full | No |

Key takeaways:

- KeyDB and Valkey: Near drop-in replacements with high command compatibility, making migration relatively straightforward.

- Hazelcast: Not Redis-compatible. Requires architectural changes and adaptation to its distributed data model.

- Modules: This is often the biggest migration blocker. Redis modules are not always supported in alternatives.

Before migrating, always map your actual Redis usage (commands, scripts, modules) against the target system. Even small incompatibilities can lead to unexpected behavior in production.
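A lightweight way to start that mapping is to group the commands you use by feature and look up each group in the matrix above. In the sketch below, the support levels are transcribed from this article's matrix and the command-to-group mapping is a small, hypothetical sample; extend both for your workload and confirm the levels against each project's documentation for the versions you run.

```python
# Support levels transcribed from the compatibility matrix in this article.
SUPPORT = {
    "keydb": {
        "basic": "full", "data_structures": "full", "sorted_sets": "full",
        "pubsub": "full", "lua": "full", "streams": "full",
        "transactions": "full", "modules": "partial",
    },
    "valkey": {
        "basic": "full", "data_structures": "full", "sorted_sets": "full",
        "pubsub": "full", "lua": "full", "streams": "full",
        "transactions": "full", "modules": "limited",
    },
    "hazelcast": {
        "basic": "partial", "data_structures": "partial", "sorted_sets": "limited",
        "pubsub": "different model", "lua": "no", "streams": "limited",
        "transactions": "different API", "modules": "no",
    },
}

# Hypothetical sample mapping from Redis commands to the matrix's feature groups.
COMMAND_GROUP = {
    "GET": "basic", "SET": "basic", "DEL": "basic",
    "HGETALL": "data_structures", "LPUSH": "data_structures", "SADD": "data_structures",
    "ZADD": "sorted_sets", "ZRANGE": "sorted_sets",
    "PUBLISH": "pubsub", "SUBSCRIBE": "pubsub",
    "EVAL": "lua", "EVALSHA": "lua",
    "XADD": "streams", "XREAD": "streams",
    "MULTI": "transactions", "EXEC": "transactions",
    "JSON.SET": "modules", "FT.SEARCH": "modules",
}


def compatibility_gaps(used_commands, target):
    """Return {command: support level} for every command not fully supported by target."""
    support = SUPPORT[target]
    gaps = {}
    for cmd in used_commands:
        group = COMMAND_GROUP.get(cmd.upper())
        # Commands missing from the mapping need a manual check.
        level = support[group] if group else "unmapped"
        if level != "full":
            gaps[cmd] = level
    return gaps


used = ["GET", "SET", "ZADD", "EVALSHA", "JSON.SET", "OBJECT"]
for target in SUPPORT:
    print(target, compatibility_gaps(used, target))
```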

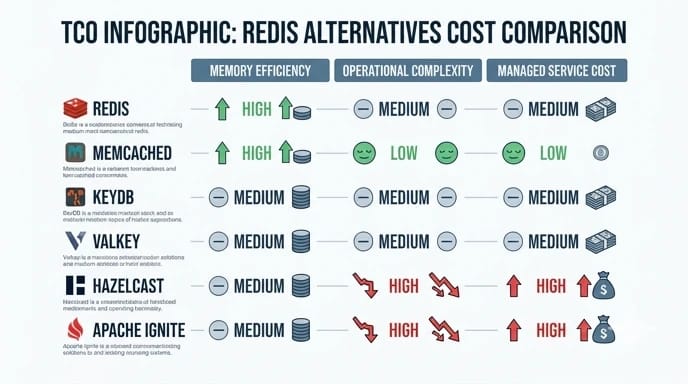

Cost Comparison & TCO Example (Per GB RAM / Operations / Managed Services)

Cost is often the hidden factor in choosing a Redis alternative. While many in-memory data stores appear similar at a high level, their total cost of ownership (TCO) can vary significantly depending on memory usage, scaling model, and operational overhead.

Instead of focusing only on licensing, it is more useful to break costs down into three practical dimensions: memory cost, operational cost, and managed service pricing.

Cost Dimensions to Consider

- Per GB RAM cost: In-memory systems are heavily dependent on RAM, which is typically the most expensive resource.

- Operations cost (ops/sec): Higher throughput systems may reduce the number of required nodes.

- Operational overhead: Cluster management, failover, and monitoring complexity.

- Managed services pricing: Cloud providers often charge a premium for simplicity and reliability.

High-Level Cost Comparison

| Technology | Memory Efficiency | Operational Complexity | Managed Cost Level | TCO Profile |
|---|---|---|---|---|
| Redis | Moderate | Medium | High (ElastiCache, etc.) | Balanced but can get expensive at scale |
| Memcached | High | Low | Medium | Very cost-efficient for simple caching |
| KeyDB | Moderate (savings come from better CPU utilization) | Medium | Medium | Lower cost under high concurrency |
| Valkey | Moderate | Medium | Medium | Cost-stable with open governance |
| Hazelcast | Moderate | High | High | Higher upfront cost, better at large scale |
| Apache Ignite | Moderate | High | High | Efficient for compute-heavy workloads |

Example TCO Scenario

Consider a typical production setup requiring 100 GB of in-memory data with high read/write throughput:

- Redis (managed): High RAM cost + managed service premium → simplest but expensive

- Memcached: Lower operational cost → cheapest option if persistence is not required

- KeyDB: Better CPU usage → fewer nodes required → lower total cost under load

- Hazelcast / Ignite: Higher infrastructure and operational cost → justified only for advanced workloads
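The node-count reasoning behind this scenario can be made explicit: a cluster must be large enough for both its memory footprint and its throughput target, so the larger of the two requirements wins. Every input below (one replica, 64 GB nodes, 75% usable memory to leave headroom for fragmentation and snapshot forks, per-node ops/sec) is an illustrative assumption, not a measured figure; multiply the result by your provider's per-node price to estimate cost.

```python
import math


def nodes_needed(data_gb, replicas, node_ram_gb, usable_fraction, target_ops, ops_per_node):
    """Return (nodes, nodes_for_memory, nodes_for_throughput); every input is an assumption."""
    # Each replica stores a full copy of the data.
    total_gb = data_gb * (1 + replicas)
    # Only part of each node's RAM is usable once headroom is reserved.
    by_memory = math.ceil(total_gb / (node_ram_gb * usable_fraction))
    by_throughput = math.ceil(target_ops / ops_per_node)
    return max(by_memory, by_throughput), by_memory, by_throughput


# 100 GB dataset, one replica, 64 GB nodes with 75% usable, 1M ops/s target.
for label, ops_per_node in (("engine A", 150_000), ("engine B", 400_000)):
    total, mem, tput = nodes_needed(100, 1, 64, 0.75, 1_000_000, ops_per_node)
    print(f"{label}: {total} nodes (memory needs {mem}, throughput needs {tput})")
```

With these made-up inputs, engine A is throughput-bound at 7 nodes, while engine B's higher per-node throughput brings it down to the memory floor of 5 nodes and no further. Past that point, only memory efficiency lowers the bill.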

Key Takeaways

- Memory cost dominates TCO in most in-memory systems

- Higher throughput can reduce infrastructure footprint

- Managed services simplify operations but increase cost

- The cheapest option is not always the most scalable long-term

Ultimately, the most cost-effective solution depends on your workload. Systems optimized for simplicity, compatibility, or distributed computing will have very different cost profiles.

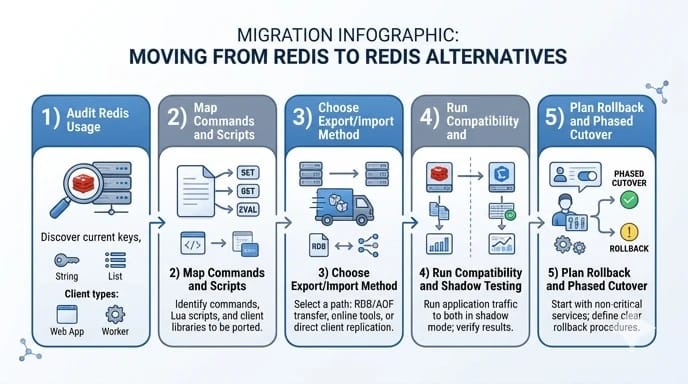

Migration Checklist & Step-by-step Guides

Migrating from Redis to an alternative is not just a technical switch. It is a process that requires careful validation, compatibility checks, and rollback planning. Based on real-world migrations, the following checklist helps reduce risk and avoid unexpected failures.

Audit Redis Usage (Commands & Scripts)

The first step is understanding how Redis is actually used in your application. Many teams underestimate hidden dependencies until migration begins.

- Identify frequently used commands (GET, SET, HGETALL, ZRANGE, etc.)

- Check for advanced data structures such as streams, sorted sets, or pub/sub

- Review Lua scripts and custom logic

- Analyze TTL usage and eviction policies

This audit determines whether a Redis-compatible solution like KeyDB or Valkey is sufficient, or if a more advanced alternative is required.
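For the command inventory, Redis already tracks per-command call counts: `redis-cli INFO commandstats` returns one `cmdstat_<command>:calls=...,usec=...` line per command, and `CONFIG RESETSTAT` resets the counters if you want to measure a specific window. The sketch below parses that text into a ranked list. The sample numbers are invented, the function name is our own, and call counts do not reveal what your Lua scripts do, so review those separately.

```python
def parse_commandstats(info_text):
    """Parse `INFO commandstats` output into {COMMAND: calls}, busiest first."""
    calls = {}
    for line in info_text.splitlines():
        line = line.strip()
        if not line.startswith("cmdstat_"):
            continue  # skip the "# Commandstats" header and blank lines
        name, _, fields = line.partition(":")
        values = dict(item.split("=", 1) for item in fields.split(","))
        calls[name[len("cmdstat_"):].upper()] = int(values["calls"])
    return dict(sorted(calls.items(), key=lambda kv: kv[1], reverse=True))


# Invented sample in the INFO commandstats format; capture yours with:
#   redis-cli INFO commandstats
SAMPLE = """# Commandstats
cmdstat_get:calls=918204,usec=1203911,usec_per_call=1.31,rejected_calls=0,failed_calls=0
cmdstat_set:calls=210533,usec=402117,usec_per_call=1.91,rejected_calls=0,failed_calls=0
cmdstat_evalsha:calls=1204,usec=96320,usec_per_call=80.00,rejected_calls=0,failed_calls=3
cmdstat_zrange:calls=55012,usec=220048,usec_per_call=4.00,rejected_calls=0,failed_calls=0
"""

for command, count in parse_commandstats(SAMPLE).items():
    print(f"{command:<10} {count:>10,}")
```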

Data Export / Import Options (RDB / AOF → KeyDB / Valkey / Others)

Data migration strategy depends heavily on the target system and your tolerance for downtime.

- RDB snapshots: Suitable for bulk migration with minimal complexity. Commonly used when moving to Redis-compatible systems.

- AOF replay: Useful for near real-time migration with minimal data loss.

- Dual-write strategy: Write to both Redis and the new system during transition.

- ETL pipelines: Required when migrating to non-compatible systems like MongoDB or Ignite.

For Redis-compatible tools (KeyDB, Valkey), migration is usually straightforward. For others, transformation layers may be required.
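A dual-write layer can be as thin as a wrapper that writes to both systems while keeping Redis as the source of truth. The sketch below uses in-memory stand-ins instead of real clients (`DictStore`, `FlakyStore`, and `DualWriteStore` are hypothetical names). In practice you would also backfill keys written before dual-writing began, for example from an RDB snapshot, and decide how to handle write ordering between concurrent clients.

```python
class DictStore:
    """In-memory stand-in for a Redis-like client with get/set."""

    def __init__(self):
        self.data = {}

    def get(self, key):
        return self.data.get(key)

    def set(self, key, value):
        self.data[key] = value


class DualWriteStore:
    """Write to both stores; serve reads from the primary until cutover."""

    def __init__(self, primary, secondary):
        self.primary = primary
        self.secondary = secondary
        self.failed_secondary_writes = []

    def set(self, key, value):
        self.primary.set(key, value)  # Redis stays the source of truth
        try:
            self.secondary.set(key, value)
        except Exception:
            # Record the key for later reconciliation instead of failing the request.
            self.failed_secondary_writes.append(key)

    def get(self, key):
        return self.primary.get(key)


class FlakyStore(DictStore):
    """Simulates a target system that rejects some writes."""

    def set(self, key, value):
        if key.startswith("bad:"):
            raise ConnectionError("simulated write failure")
        super().set(key, value)


redis_side, new_side = DictStore(), FlakyStore()
store = DualWriteStore(redis_side, new_side)
store.set("user:1", "alice")
store.set("bad:2", "bob")
print(store.get("bad:2"), new_side.get("bad:2"), store.failed_secondary_writes)
# bob None ['bad:2']
```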

Compatibility Testing & Rollback Strategies

Before fully switching production traffic, validation is critical.

- Shadow testing: Run the new system in parallel without affecting users

- Performance validation: Compare latency and throughput under real load

- Consistency checks: Ensure data integrity between systems

- Failure simulation: Test node crashes, failover, and recovery
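For consistency checks, comparing a random sample of keys between the two systems is often enough to catch drift early. `consistency_report` below is a hypothetical helper that takes two getter functions, so it works with any client exposing something like `get(key)`. With Redis, collect candidate keys via `SCAN` rather than `KEYS` to avoid blocking the server, and re-check flagged keys before alarming, since expirations and in-flight writes can cause benign mismatches.

```python
import random


def consistency_report(keys, old_get, new_get, sample_size=1000, seed=None):
    """Compare a random sample of keys across two stores.

    Returns (number_checked, mismatched_keys).
    """
    rng = random.Random(seed)
    sample = rng.sample(list(keys), min(sample_size, len(keys)))
    mismatches = [key for key in sample if old_get(key) != new_get(key)]
    return len(sample), mismatches


# In-memory stand-ins: the new store is missing one key and has one stale value.
old_store = {f"user:{i}": f"v{i}" for i in range(5000)}
new_store = dict(old_store)
new_store["user:42"] = "stale"
del new_store["user:7"]

checked, bad = consistency_report(old_store, old_store.get, new_store.get, sample_size=5000, seed=1)
print(f"checked {checked} keys, {len(bad)} mismatches: {sorted(bad)}")
```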

A rollback plan should always be in place. Even Redis-compatible systems can behave differently under edge conditions.

- Keep Redis running during initial rollout

- Use feature flags to control traffic switching

- Monitor error rates and latency closely
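Percentage-based traffic switching works best when the routing decision is deterministic, so a given user does not bounce between stores from one request to the next. A common approach, sketched below under the assumption that you route by a stable ID such as a user ID, hashes the ID into one of 100 buckets. Raising the percentage only adds users to the new store, and setting it to 0 acts as an immediate rollback. The function name and salt are illustrative.

```python
import hashlib


def routes_to_new_store(subject_id, rollout_percent, salt="redis-migration"):
    """Return True if this subject's traffic should go to the new store.

    The subject is hashed into one of 100 stable buckets, so the same ID
    always gets the same answer for a given rollout percentage.
    """
    digest = hashlib.sha256(f"{salt}:{subject_id}".encode()).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < rollout_percent


users = [f"user-{i}" for i in range(20_000)]
at_5 = {u for u in users if routes_to_new_store(u, 5)}
at_25 = {u for u in users if routes_to_new_store(u, 25)}
print(len(at_5) / len(users), len(at_25) / len(users), at_5 <= at_25)
```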

Successful migrations are not defined by speed, but by stability. A gradual transition with continuous monitoring is always safer than a full cutover.

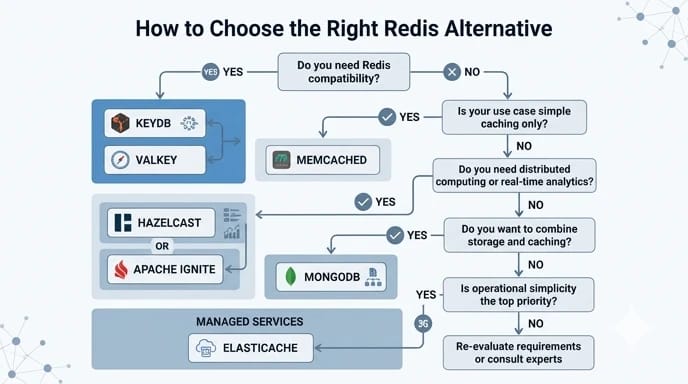

How to Choose: Decision Flowchart

Choosing the right Redis alternative can be simplified by focusing on your primary constraint. Instead of comparing every feature, start with what matters most in your workload.

The decision tree below provides a quick way to narrow down the best option. Start at the top and answer each question in order:

- Do you need Redis compatibility?
  - Yes → Choose KeyDB (for performance) or Valkey (for open-source stability)
  - No → Continue

- Is your use case simple caching only?
  - Yes → Choose Memcached
  - No → Continue

- Do you need distributed computing or real-time analytics?
  - Yes → Choose Hazelcast or Apache Ignite
  - No → Continue

- Do you want to combine storage and caching?
  - Yes → Consider MongoDB
  - No → Continue

- Is operational simplicity your top priority?
  - Yes → Consider managed services (e.g., ElastiCache)

- If you're still unsure, start with a Redis-compatible option (KeyDB or Valkey) and iterate from there.
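If you want to embed this decision flow in an internal tool or checklist, it maps directly onto ordered conditionals. The sketch below (function and parameter names are our own) returns the first recommendation whose question is answered yes, in the same order as the list.

```python
def recommend(*, needs_redis_compat=False, simple_caching_only=False,
              distributed_compute=False, combine_storage_and_cache=False,
              ops_simplicity_first=False):
    """Walk the decision questions in order and return the first matching suggestion."""
    if needs_redis_compat:
        return "KeyDB (performance) or Valkey (open-source stability)"
    if simple_caching_only:
        return "Memcached"
    if distributed_compute:
        return "Hazelcast or Apache Ignite"
    if combine_storage_and_cache:
        return "MongoDB"
    if ops_simplicity_first:
        return "Managed service (e.g., ElastiCache)"
    # No strong constraint: start Redis-compatible and iterate.
    return "Start with KeyDB or Valkey and iterate"


print(recommend(distributed_compute=True))
```

Because the checks run in order, Redis compatibility takes precedence: a workload that needs it and is also simple caching still lands on KeyDB or Valkey.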

Quick summary:

- Minimal change → KeyDB / Valkey

- Lowest cost & simplicity → Memcached

- High scalability & analytics → Hazelcast / Ignite

- Flexible data model → MongoDB

This simplified flow is not a replacement for detailed evaluation, but it helps quickly eliminate unsuitable options and focus on the most relevant candidates.

Not sure which Redis alternative to start with?

If your priority is minimal migration effort, start with KeyDB or Valkey. If you're optimizing for cost and simplicity, try Memcached. For distributed workloads, evaluate Hazelcast or Apache Ignite.

In most cases, running a small-scale benchmark with your own workload is the fastest way to validate your choice.

Conclusion and Recommendations

Choosing among Redis alternatives is not about finding a universally better system. It is about selecting the right tool for your workload, team expertise, and long-term goals.

For simple caching, Memcached remains a strong choice. For teams seeking Redis compatibility with improved performance, KeyDB and Valkey are excellent options. For large-scale distributed systems, Hazelcast and Apache Ignite provide capabilities far beyond traditional caching.

The best Redis replacements are the ones that align with your real-world constraints, not just benchmark results. By evaluating Redis alternatives through performance, scalability, cost, and operational fit, you can make a confident and future-proof decision.

If you are planning to deploy any of these solutions in production, choosing the right infrastructure is just as important as selecting the database itself. You can explore optimized servers for database workloads depending on your performance and scaling needs.

Appendix / Further Resources

For deeper technical evaluation and hands-on implementation, the following resources can help you explore Redis alternatives in more detail. These include official documentation, benchmarking tools, and practical command-line examples.

Official Documentation

- Redis Documentation

- KeyDB Documentation

- Valkey Project

- Apache Ignite Documentation

- MongoDB Documentation

Benchmarking Tools

- YCSB (Yahoo Cloud Serving Benchmark)

- redis-benchmark (bundled with Redis)

CLI & Practical Examples

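- Basic Redis benchmark:

```shell
# 100,000 GET/SET requests; -q prints only the requests-per-second summary
redis-benchmark -t get,set -n 100000 -q
```

- Export Redis data (RDB):

```shell
# BGSAVE writes dump.rdb in the background; plain SAVE blocks the server until done
redis-cli bgsave
```

- Monitor real-time activity:

```shell
# MONITOR streams every command and can noticeably reduce throughput; use it briefly
redis-cli monitor
```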

These resources are a good starting point for validating performance, testing compatibility, and exploring production-ready deployment strategies. Whenever possible, combine documentation with real-world testing to ensure the chosen solution fits your workload.
